Mar 04, 2020 This guide takes you through understanding HTML web pages, building a web scraper with Python, and creating a DataFrame with pandas. It covers data quality, data cleaning, and data-type conversion, step by step, with instructions, code, and explanations of how every piece works. The vast amount of data on the Internet is a rich resource for any field of research or personal interest. To harvest that data effectively, you'll need to become skilled at web scraping. The Python libraries requests and Beautiful Soup are powerful tools for the job. This guide is a good fit if you like to learn with hands-on examples and have a basic understanding of Python.

Build a Web Scraper with Python

Python is one of the most popular programming languages for data mining and web scraping. The community offers many libraries and niche scrapers; here are five of the most popular.

Our API provides most of these libraries' advantages, and some of the libraries can be used alongside it.

The Top 5 Python Web Scraping Libraries in 2020

1. Requests

A library well known to most Python developers as a fundamental tool for fetching raw HTML from web resources.

To install the library, run `pip install requests` in your command prompt or terminal.

After this, you can check the installation in the REPL:
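A quick check could look like the following minimal sketch. It assumes requests is installed in the active environment; the helper name `fetch_html` and the 10-second timeout are illustrative choices, not part of the original article.

```python
import requests

# Printing the version confirms that the package can be imported.
print(requests.__version__)


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Return the raw HTML of a page (hypothetical helper for illustration)."""
    response = requests.get(url, timeout=timeout)
    response.raise_for_status()  # raise an exception on 4xx/5xx responses
    return response.text
```

Calling `fetch_html` with any public URL returns the page source as a string, ready to be handed to a parser.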

- Official docs URL: https://requests.readthedocs.io/en/latest/

- GitHub repository: https://github.com/psf/requests

2. LXML

When speed and HTML parsing matter, keep in mind the great library called LXML. It is a real champion at HTML and XML parsing, so software based on LXML can be used to scrape frequently changing pages, such as gambling sites that provide odds for live events.

To install the library, run `pip install lxml` in your command prompt or terminal.

The LXML Toolkit is a really powerful instrument and the whole functionality can't be described in just a few words, so the following links might be very useful:

- Official docs URL: https://lxml.de/index.html#documentation

- GitHub repository: https://github.com/lxml/lxml/
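As a small illustration of LXML parsing, here is a minimal sketch that extracts values with XPath. The markup, team names, and prices are invented sample data standing in for a downloaded odds page.

```python
from lxml import html

# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<html><body>
  <table id="odds">
    <tr><td class="team">Alpha</td><td class="price">1.85</td></tr>
    <tr><td class="team">Beta</td><td class="price">2.10</td></tr>
  </table>
</body></html>
"""

tree = html.fromstring(SAMPLE_HTML)

# XPath returns the matching text nodes as a list of strings.
teams = tree.xpath('//td[@class="team"]/text()')
prices = [float(p) for p in tree.xpath('//td[@class="price"]/text()')]

# Pair each team with its price.
odds = dict(zip(teams, prices))
print(odds)
```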

3. BeautifulSoup

A large share of the Python web scraping tutorials on the Internet use the BeautifulSoup4 library as a simple tool for handling retrieved HTML in a human-friendly way: selectors, attributes, the DOM tree, and much more. It is a good choice for porting code to or from JavaScript's Cheerio or jQuery.

To install this library, run `pip install beautifulsoup4` in your command prompt or terminal.

As mentioned before, there are plenty of tutorials on the Internet about using BeautifulSoup4, so do not hesitate to Google it!

- Official docs URL: https://www.crummy.com/software/BeautifulSoup/bs4/doc/

- Launchpad repository: https://code.launchpad.net/~leonardr/beautifulsoup/bs4
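To give a feel for the selector-based style, here is a minimal sketch using a CSS selector, much as one would in jQuery or Cheerio. The markup is invented sample data, and `html.parser` (the parser from Python's standard library) is an assumed choice; any parser supported by BeautifulSoup4 could be used instead.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<ul class="nav">
  <li><a href="/docs">Docs</a></li>
  <li><a href="/blog">Blog</a></li>
</ul>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# select() takes a CSS selector; each match exposes its text and attributes.
links = [(a.get_text(strip=True), a["href"]) for a in soup.select("ul.nav a")]
print(links)
```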

4. Selenium

Selenium is the most popular web driver, with wrappers for most programming languages. Quality assurance engineers, automation specialists, developers, and data scientists have all used this tool at least once. For web scraping it's like a Swiss Army knife: no additional libraries are needed, because any action can be performed in a browser just as a real user would, including opening pages, clicking buttons, filling in forms, solving CAPTCHAs, and much more.

To install this library, run `pip install selenium` in your command prompt or terminal.

The code below shows how easily web crawling can be started with Selenium:

As this example illustrates only 1% of Selenium's power, we'd like to offer the following useful links:

- Official docs URL: https://selenium-python.readthedocs.io/

- GitHub repository: https://github.com/SeleniumHQ/selenium

5. Scrapy

Scrapy is a leading web scraping framework, developed by a team with extensive enterprise scraping experience. Software built on top of it can be a crawler, a scraper, a data extractor, or all of these together.

To install this library, run `pip install scrapy` in your command prompt or terminal.

We definitely suggest you start with the tutorial to learn more about this piece of gold: https://docs.scrapy.org/en/latest/intro/tutorial.html

As usual, the useful links are below:

- Official docs URL: https://docs.scrapy.org/en/latest/index.html

- GitHub repository: https://github.com/scrapy/scrapy

Which web scraping library should you use?

In the end, the choice depends on you and on the task you're trying to solve, but always remember to read the Privacy Policy and Terms of the site you're scraping 😉.

I've recently had to perform some web scraping from a site that required login. It wasn't as straightforward as I expected, so I decided to write a tutorial for it.

For this tutorial we will scrape a list of projects from our Bitbucket account.

The code from this tutorial can be found on my GitHub.

We will perform the following steps:

- Extract the details that we need for the login

- Perform login to the site

- Scrape the required data

For this tutorial, I've used the following packages (can be found in the requirements.txt):

Open the login page

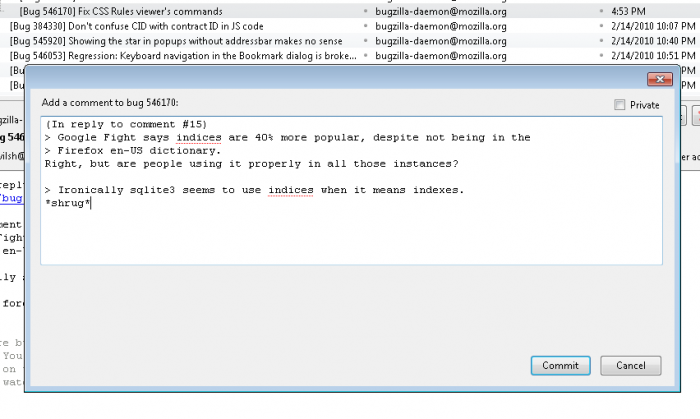

Go to the following page 'bitbucket.org/account/signin' .You will see the following page (perform logout in case you're already logged in)

Check the details that we need to extract in order to login

In this section we will build a dictionary that will hold our details for performing login:

- Right-click on the 'Username or email' field and select 'inspect element'. We will use the value of the 'name' attribute for this input, which is 'username'. 'username' will be the key and our user name / email will be the value (on other sites this might be 'email', 'user_name', 'login', etc.).

- Right-click on the 'Password' field and select 'inspect element'. In the script we will need to use the value of the 'name' attribute for this input, which is 'password'. 'password' will be the key in the dictionary and our password will be the value (on other sites this might be 'user_password', 'login_password', 'pwd', etc.).

- In the page source, search for a hidden input tag called 'csrfmiddlewaretoken'. 'csrfmiddlewaretoken' will be the key and the value will be the hidden input's value (on other sites this might be a hidden input with the name 'csrf_token', 'authentication_token', etc.). For example 'Vy00PE3Ra6aISwKBrPn72SFml00IcUV8'.

We will end up with a dict that will look like this:
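Based on the three keys identified above, the dictionary can be sketched as follows. The values are placeholders to be replaced with your own credentials and with the token read from the page.

```python
# Keys are the 'name' attributes of the login form inputs;
# values are placeholders, not real credentials.
payload = {
    "username": "<USER NAME>",
    "password": "<PASSWORD>",
    "csrfmiddlewaretoken": "<CSRF_TOKEN>",
}
```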

Keep in mind that this is the specific case for this site. While this login form is simple, other sites might require us to check the request log of the browser and find the relevant keys and values that we should use for the login step.

For this script we only need to import requests and the html module from lxml.

First, we would like to create our session object. This object will allow us to persist the login session across all our requests.
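A minimal sketch of this step, assuming the requests library; the variable name `session_requests` is an illustrative choice.

```python
import requests

# A Session stores cookies between requests, so the login cookie set by
# the server is sent automatically with every later request.
session_requests = requests.Session()
```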

Second, we would like to extract the CSRF token from the web page; this token is used during login. For this example we are using lxml and XPath, but we could have used a regular expression or any other method that extracts this data.

** More about xpath and lxml can be found here.
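The token extraction can be sketched like this, assuming the hidden input is named 'csrfmiddlewaretoken' as described earlier. The function name `extract_csrf_token` is hypothetical, and the sample markup is a stand-in for the real login page.

```python
from lxml import html


def extract_csrf_token(page_html: str) -> str:
    """Return the value of the hidden 'csrfmiddlewaretoken' input."""
    tree = html.fromstring(page_html)
    values = tree.xpath("//input[@name='csrfmiddlewaretoken']/@value")
    if not values:
        raise ValueError("csrfmiddlewaretoken input not found")
    return str(values[0])


# Stand-in for the login page source, using the example token from the text.
SAMPLE_LOGIN_PAGE = """
<html><body><form method="post">
  <input type="hidden" name="csrfmiddlewaretoken"
         value="Vy00PE3Ra6aISwKBrPn72SFml00IcUV8">
</form></body></html>
"""

token = extract_csrf_token(SAMPLE_LOGIN_PAGE)
print(token)
```

In the real script, the page source would come from a GET request made with the session object, and the returned token would be stored in the login dictionary.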

Next, we would like to perform the login phase. In this phase, we send a POST request to the login URL. We use the payload that we created in the previous step as the data. We also use a header for the request and add a referer key to it for the same URL.

Now that we have logged in successfully, we will perform the actual scraping from the Bitbucket dashboard page.
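A minimal sketch of the login request follows. `LOGIN_URL` is an assumption: it is the sign-in page named earlier with an https:// scheme added, and the function name `login` is hypothetical.

```python
import requests

# Assumed URL: the sign-in page named earlier, with an https:// scheme added.
LOGIN_URL = "https://bitbucket.org/account/signin"


def login(session: requests.Session, payload: dict) -> requests.Response:
    """POST the login form and return the server's response."""
    return session.post(
        LOGIN_URL,
        data=payload,                    # form fields, including the CSRF token
        headers={"referer": LOGIN_URL},  # referer set to the login URL itself
    )
```

Passing the same session object to later requests keeps the cookies set by this response.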

To test this, let's scrape the list of projects from the Bitbucket dashboard page. Again, we will use XPath to find the target elements and print out the results. If everything went OK, the output should be the list of buckets / projects that are in your Bitbucket account.
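The scraping step might look like the sketch below. Both `DASHBOARD_URL` and `PROJECT_XPATH` are hypothetical values not given in the text; inspect the real dashboard page and adjust them before use. Parsing is kept separate from fetching so it can be tried on saved HTML.

```python
import requests
from lxml import html

# Hypothetical values: adjust both to the page you are actually scraping.
DASHBOARD_URL = "https://bitbucket.org/dashboard/overview"
PROJECT_XPATH = "//div[@class='repo-list--repo']/a/text()"


def parse_project_names(page_html: str, xpath: str = PROJECT_XPATH) -> list[str]:
    """Extract project names from dashboard HTML using an XPath expression."""
    tree = html.fromstring(page_html)
    return [name.strip() for name in tree.xpath(xpath)]


def scrape_projects(session: requests.Session, url: str = DASHBOARD_URL) -> list[str]:
    """Fetch the dashboard with a logged-in session and return project names."""
    result = session.get(url, headers={"referer": url})
    return parse_project_names(result.text)


# Offline demonstration on invented markup.
SAMPLE_DASHBOARD = """
<html><body>
  <div class="repo-list--repo"><a href="#"> project-a </a></div>
  <div class="repo-list--repo"><a href="#">project-b</a></div>
</body></html>
"""
print(parse_project_names(SAMPLE_DASHBOARD))
```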

You can also validate the results by checking the status code returned from each request. It won't always tell you whether the login phase was successful, but it can be used as an indicator.

For example:
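The check can be sketched with the `ok` and `status_code` attributes of a requests response. The helper name `describe_result` is hypothetical.

```python
import requests


def describe_result(result: requests.Response) -> str:
    """Summarize a response: 'ok' is True when the status code is below 400."""
    state = "ok" if result.ok else "failed"
    return f"{result.status_code} ({state})"
```

Keep in mind that a login form may answer with status 200 even when the credentials were rejected, so checking the page content is a useful second step.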

That's it.

The full code sample can be found on GitHub.